"Over the past few months, tens of thousands of Australians have emailed their

local MP calling for a 25% tax on gas exports. More than 2,200 people have even

chipped in their own money to fund billboards promoting the idea.

Continuations 2026/23: En fuego

I’ve well and truly entered release prep mode. Lots of stuff done this week, and I expect about another week or so until I can get a release candidate out the door.

My most notable accomplishment this week was writing overviews of two big features that will be landing soon: Previewing mailers in Hanami 3.0 and Previewing i18n integration in Hanami 3.0. I hope these will help our users kick the tyres — let me know how you go, I’m keen for feedback!

I upgraded decafsucks to the latest Hanami main branches and discovered that our default component memoization conflicted with the container stubbing I was using in the app. So to preserve that functionality, I disabled container memoization in the test env.

For a brief moment on Friday, I was hoping to squeeze in a “databases in Docker” default arrangement for new Hanami apps, but this turned out to be a bit too much to bite off. It’s going to need some changes to our CLI commands regarding how they handle file paths, and would most likely require binstubs for the database CLI tools that forward to their counterpart within the container. I pulled the plug for this release, but I do think it’s a nice idea, and something we could try again in the future.

This moment wasn’t a waste though, because I did get to advance my experiment with this approach in decafsucks. Now the db prepare command fully works, and I’m also using the same compose file and binstubs in CI as I do for development.

Dry Operation’s main branch CI started failing for JRuby. This turned out to be a bug in activerecord-jdbc-adapter. I worked around it in our CI, but also took the chance to file a proper issue: SQLite3 ‘new_column_from_field’ doesn’t pass ‘cast_type’ to ‘Column.new’ on ActiveRecord 8.1, leading to exceptions.

Got pissed at our RuboCop checks failing a Gemfile entry in my dry-operation workaround above and finally switched our Layout/ArgumentAlignment to the ‘with_fixed_indentation’ style. Why ‘with_first_argument’ is the default is beyond me. Who wants all those huge irregular whitespace blocks littering their code? Bad for Tetris, and bad for Ruby, at least IMHO!

Sean has been en fuego lately. This week I reviewed, from Sean: bug fixes to hanami-mailer, spec improvements to hanami-action, and a whole slate of hot path performance improvements to hanami-action. Thank you Sean! Your perf work in particular is going to be a very nice story to share for this upcoming release.

I recorded a podcast this week! Thanks to Jared for getting me back on Dead Code. It was a great chat, I can’t wait for the episode to come out.

I’ve been working through the remaining to-dos before we can be release-ready: tweaked docs about Hanami View exposures (thanks to Paweł for putting it together!), reviewed a fix to avoid duplicating routes when generating actions (very nice work from Jane Sandberg!), added a ‘generate provider’ CLI command, and generated mailers in new apps and slices.

There are still a few small code changes to make, CHANGELOGs to prepare, docs to write, and a release candidate announcement to draft. I’m going to kick into overdrive to get as much of this done as I can in the next ~week. I’m keen to get both the release candidate and final release done within June. We’ll see how we go!

Best of all, Max made us a very, very nice new splash screen for Hanami 3.0 apps, in keeping with our Hanakai branding. I couldn’t merge this fast enough. Thank you Max!

This is gonna be a great release! Now I just have to get it done.

So yeah, I was caught up in the Meta/Facebook layoffs in March 2026.

There are a lot of parallels to the 2001 dot com bust, and I'd like to just briefly touch on one of them now.

There's been a lot of layoffs over the last year or three in tech. I think the tech market has seen what, more than 100,000 in the last year alone? And most of those aren't due to performance. There are a lot of very smart people who've spent quite a few years learning how to build, debug and maintain all kinds of interesting stuff.

And they're now in the marketplace.

When this happened in 2000/2001, you had a whole lot of people who learned how to build internet tech and infrastructure at companies that were pushing the boundaries with things. And they were let go, due to downturns, change of focus, all kinds of reasons.

A lot of those people went off to eventually build the new stuff that likely overtook a lot of those internet and telco companies in the 90s.

I think this is going to happen with the layoffs from Meta/Facebook and other technology companies. Meta ran (runs?) a huge research arm in Reality Labs pushing the boundaries in AR, XR, wearable/portable technology. There have been plenty of public technology demonstrations showing all the stuff going on before the current AI trend.

Just over in Reality Labs - people learned how to build stuff from ASIC design up through optics, display, camera technology, highly miniaturized electronic design and power, all the fun stuff around 2D/3D audio and video stuff on wearable glasses (how do you provide head and world locked surfaces to your applications and not have it be so laggy that it gives people headaches?), making it all work over wifi, and then .. well imagine the lessons learned in what can and can't work in manufacturing the devices and where the pain points are in current technology. (And yes, a lot more I can't talk about, for hopefully obvious reasons.)

That knowledge is now embedded in a few hundred people, soon to be a few thousand people, who are being laid off. And that's just Reality Labs - the other people spread throughout the company and other technology companies have gathered a lot of experience about how to make and grow technology "stuff", what works and what doesn't.

At some point some of those people are going to make startups that do really amazing things. The technology underpinning a lot of what people have been trying to make now will get better (and I will argue that right now it is good enough - if you're willing to shift your focus a little) and things will appear that will knock the socks off the current offerings. They're not what you would view as "founders" in the Bay Area tech scene. They're the people who know how to make things work, not necessarily the people who got rich early on in the scene.

Just like what happened after the dot com bust of 2001.

I've heard from a few people now that those they're hiring from Meta and other large technology companies are top notch and know what they're doing. You can't spend 10 years at a company that heavily invested in cutting edge R&D and not learn a thing or two.

I think it's their loss, and .. eventually, everyone else's gain.

I have just bought a HP Z4 G4 with W-2125 CPU for $320 and I decided it was a good time to do some benchmarks on Debian package building to see which system I should use for that.

The W-2125 CPU scores only 9,954 on the passmark multithread test but scores 2,546 on single thread [1]. Passmark seems to have some limitations though. The only DDR3 system that’s important to me at the moment (the HP Z420 workstation my parents use which cost me $750 in 2021) has an E5-2620 CPU scoring 5,325 for multithread and 1,113 for single thread [2]. From the passmark results one would expect that the new system is slightly more than twice as fast as the Z420 for operations that involve fewer than 4 CPU cores.

For the initial tests of the Z4 G4 I ran them with hyper-threading enabled as 4 cores isn’t much by today’s standards and also the machine in question is going to be less exposed to hostile data and contain less secret data than most of my systems so the security risks of hyper-threading are less of a concern.

I did some tests with a couple of tasks that are very important to me, building SE Linux policy packages (something I may do a dozen times in a day) and building Warzone 2100 (which I do less often but is the most intensive build process I regularly run). At the bottom of this post there are tables with the results from building these packages on my Z640 workstation with a E5-2696 v4 CPU [3], the Z420, and the new machine.

For the Warzone 2100 package I tested building on my Z840 dual CPU system [4]. I didn’t test building the SE Linux policy on the Z840 this time because that package can’t take advantage of even 22 cores. When I initially got the Z840 running it built the policy packages faster because the Z640 had an older CPU that was slower for single core operations than the CPUs in the Z840.

For some time I have noticed significant differences in compile time on my workstation, a factor of more than 2. I did more tests and noticed that “top” showed something like the following, those kernel threads are all BTRFS related, except for “gfx” which is probably something graphical caused by running Chrome with about 300 tabs open.

2144316 root 20 0 0 0 0 I 26.6 0.0 0:36.76 kworker/u88:20-btrfs-endio-write
2221470 root 20 0 0 0 0 I 23.7 0.0 0:01.85 kworker/u88:12-gfx
2221436 root 20 0 0 0 0 I 15.1 0.0 0:07.48 kworker/u88:8-btrfs-compressed-write
2166191 root 20 0 0 0 0 I 12.8 0.0 0:15.80 kworker/u88:23-btrfs-compressed-write
2126387 root 20 0 0 0 0 I 10.2 0.0 1:29.11 kworker/u88:4-events_unbound

I had been running BTRFS with the mount option “compress=zstd:15” which caused many of the performance problems when building. It was also a random performance issue, which I think happened because the BTRFS 30 second write-back sometimes took more than 30 seconds during the build process, which then caused a second write-back.

I did tests on ZSTD compression levels 5, 8, 10, and 15. 15 was never good and often really bad. 10 was not unbearable but consistently slower. 8 was sometimes as fast as 5 and sometimes quite a bit slower. I didn’t test levels below 5 because I need to have some compression and it seemed that the benefits of reducing compression were dropping off below 8.

I found that the BTRFS compression delay is not counted in system time for the process. I think it’s the fsync() system calls in the semodule and dpkg-deb programs that cause the delays related to BTRFS compression waiting for kernel threads.
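One way to see this kind of gap is to compare wall-clock time against the CPU time charged to the process around a write followed by fsync(). The following is only a minimal sketch: the function name, the payload, and the default size are illustrative assumptions, and it does not reproduce what semodule or dpkg-deb actually do.

```python
import os
import tempfile
import time


def time_write_and_fsync(size_bytes=16 * 1024 * 1024, directory=None):
    """Write size_bytes of data, fsync it, and report where the time went.

    Returns wall-clock seconds plus the user and system CPU seconds that
    were charged to this process over the same interval.
    """
    # Compressible data, so a filesystem with compression enabled has
    # something to compress (the payload itself is a made-up example).
    payload = (b"debian package build " * 4096)[:65536]
    cpu_before = os.times()
    wall_before = time.monotonic()
    with tempfile.NamedTemporaryFile(dir=directory) as f:
        remaining = size_bytes
        while remaining > 0:
            chunk = payload[:remaining]
            f.write(chunk)
            remaining -= len(chunk)
        f.flush()
        os.fsync(f.fileno())  # blocks until the kernel reports the data written
    wall = time.monotonic() - wall_before
    cpu_after = os.times()
    return {
        "wall": wall,
        "user": cpu_after.user - cpu_before.user,
        "system": cpu_after.system - cpu_before.system,
    }


if __name__ == "__main__":
    result = time_write_and_fsync()
    print(f"wall {result['wall']:.3f}s, user {result['user']:.3f}s, "
          f"system {result['system']:.3f}s")
```

If the wall time is much larger than user plus system time, the process spent most of the interval waiting rather than running, which would be consistent with work being done in kernel threads on its behalf. Passing a directory on the filesystem under test makes the comparison meaningful.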

I have all my systems other than laptops running BOINC in the background so that CPU power is used for scientific research when I don’t have any personal use for it [5]. I believe that it’s immoral to waste CPU power when it could be used for research.

The table below has test results from building the package with and without BOINC, and with different ZSTD compression levels in BTRFS. All the worst entries were from when BOINC was running, apart from one where ZSTD level 15 compression was used. The really poor performance with ZSTD level 15 was an outlier, but it wasn’t an uncommon outlier so I left it in.

Running BOINC in the background configured to use all CPU cores caused a significant increase in “user CPU time” (the time a CPU core spent actually running the program). My initial thought was that it’s partly related to “turbo boost”.

The Intel ARK page for the CPU in the Z420 shows that its main clock speed is 2.0GHz with a 2.5GHz “turbo boost” [6]. The “turbo boost” is apparently largely based on temperature and apparently limited to one core, so if the other CPU cores are all being used then the CPU will probably be too hot to have the turbo boost and if it happens it might not happen for my compile processes.

The ARK page for the E5-2699 v4 (which is a similar CPU to the E5-2696 v4 that I’m using but is officially documented by Intel) [7] shows that it has a base clock speed of 2.2GHz and a turbo boost speed of 3.6 GHz. 322 vs 244 seconds of user CPU time means running 32% slower which can plausibly be explained by the lack of a 64% turbo boost with a bit of help from the 55MB L3 cache being thrashed.
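The percentages above can be checked with a few lines of arithmetic. This sketch takes the user CPU times from the Z640 rows of the SE Linux policy table at ZSTD level 5 (with and without BOINC) and the clock speeds quoted from ARK; the helper name is just an illustrative choice.

```python
def percent_increase(new, old):
    """Return how much larger new is than old, as a percentage."""
    return (new / old - 1.0) * 100.0


# User CPU seconds for the SE Linux policy build on the Z640, ZSTD level 5.
user_with_boinc = 322.40
user_without_boinc = 244.75

# Base and turbo clock speeds quoted for the E5-2699 v4.
base_ghz = 2.2
turbo_ghz = 3.6

slowdown = percent_increase(user_with_boinc, user_without_boinc)
turbo_gain = percent_increase(turbo_ghz, base_ghz)

print(f"user CPU time increase with BOINC: {slowdown:.0f}%")   # 32%
print(f"turbo boost over base clock: {turbo_gain:.0f}%")       # 64%
```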

Turbo boost would only be a noticeable issue for building packages like the SE Linux policy packages which don’t take much advantage of multi-core CPUs. For a build process to average at best 362% CPU use there has to be large parts of the process that are limited to one or two cores which can potentially give a benefit from turbo-boost.

When building the Warzone 2100 packages most of the build time is running basis-universal which is a multi-threaded program to compress GPU texture data. This usually causes a load average of 300+ on the Z640 or 600+ on the Z840. But the build time is still increased by more than 50% on both the Z640 and the Z840 when BOINC is running in the background, which seems to be an indication that it’s not related to turbo boost. I verified that BOINC is running at IDLE schedule priority with the following command:

# chrt -p $(pidof -s einstein_O4MD_2.01_x86_64-pc-linux-gnu)
pid 2974874's current scheduling policy: SCHED_IDLE
pid 2974874's current scheduling priority: 0

In theory this means that BOINC won’t affect foreground processes.
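The same check can be scripted, which is handy when there are many BOINC worker processes to look at. This is a minimal sketch using Python's standard `os.sched_getscheduler` on Linux; the helper names are my own and the PID in the usage comment is only the one from the chrt output above.

```python
import os

# Map whichever policy constants exist on this platform to readable names.
_POLICY_NAMES = {
    getattr(os, name): name
    for name in ("SCHED_OTHER", "SCHED_FIFO", "SCHED_RR",
                 "SCHED_BATCH", "SCHED_IDLE")
    if hasattr(os, name)
}


def _policy_of(pid):
    """Return the numeric policy of pid without the reset-on-fork flag."""
    policy = os.sched_getscheduler(pid)
    # The kernel may OR this flag into the returned value, so mask it off.
    return policy & ~getattr(os, "SCHED_RESET_ON_FORK", 0)


def scheduling_policy(pid=0):
    """Return the scheduling policy name of pid (0 means this process)."""
    policy = _policy_of(pid)
    return _POLICY_NAMES.get(policy, f"unknown({policy})")


def is_idle_scheduled(pid):
    """Return True if pid runs under the SCHED_IDLE policy."""
    return hasattr(os, "SCHED_IDLE") and _policy_of(pid) == os.SCHED_IDLE


if __name__ == "__main__":
    # Example: scheduling_policy(2974874) for the BOINC task shown above.
    print(scheduling_policy())
```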

The best claims I’ve seen about HT are 15% to 30% performance boost. The best I’ve actually seen in the past is about 18%. Seeing a 10% benefit for building Warzone 2100 is at the low end of the range I expected. 8 virtual cores is not many for a build process that causes a load average of 600+ when running on a system with 44 real cores.

I was surprised to see a 6% performance benefit in hyper-threading for building the SE Linux policy as I didn’t think there was enough use of threading or multiple processes to allow that.
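For reference, here is a small sketch of how the hyper-threading benefit can be derived from the elapsed times in the Z4G4 table at the bottom of this post. It measures the benefit as the share of elapsed time saved, which is an assumption on my part; measuring it as a throughput ratio gives slightly higher numbers.

```python
def elapsed_seconds(text):
    """Convert a time(1) style 'M:SS.ss' elapsed value to seconds."""
    minutes, seconds = text.split(":")
    return int(minutes) * 60 + float(seconds)


def ht_time_saved_percent(with_ht, without_ht):
    """Return the percentage of elapsed time saved by enabling HT."""
    on = elapsed_seconds(with_ht)
    off = elapsed_seconds(without_ht)
    return (off - on) / off * 100.0


# (elapsed with HT, elapsed without HT) from the Z4G4 results table.
results = {
    "Warzone, ZSTD 5": ("17:48.03", "19:59.01"),
    "Warzone, ZSTD 10": ("17:58.72", "20:07.15"),
    "Refpolicy, ZSTD 5": ("1:24.93", "1:32.30"),
    "Refpolicy, ZSTD 10": ("1:29.63", "1:35.01"),
}

for build, (with_ht, without_ht) in results.items():
    saved = ht_time_saved_percent(with_ht, without_ht)
    print(f"{build}: {saved:.1f}% less elapsed time with HT")
```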

Many build scripts use a number of processes that match the number of apparent CPU cores. While “make -j 88” might give a theoretical performance benefit on a 44 core system it will also take a lot of RAM and any paging will outweigh the benefits of hyper-threading. On a system with only 4 real cores there’s less potential for using too much RAM and as security isn’t so important on that system I will leave it on.
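The trade-off between apparent CPU cores and RAM can be written down as a simple rule: never start more jobs than the RAM can hold. This is only a sketch, and the per-job memory figure is a guess that has to be measured for each package; the function and parameter names are hypothetical.

```python
import os


def build_jobs(available_ram_mib, ram_per_job_mib, cpu_count=None):
    """Pick a parallel job count limited by both CPU cores and RAM.

    ram_per_job_mib is an estimate of the peak memory used by one
    compile job; it is an assumption the caller must supply.
    """
    if ram_per_job_mib <= 0:
        raise ValueError("ram_per_job_mib must be positive")
    if cpu_count is None:
        cpu_count = os.cpu_count() or 1
    ram_limited = available_ram_mib // ram_per_job_mib
    # Always run at least one job, never more than the cores or RAM allow.
    return max(1, min(cpu_count, ram_limited))


if __name__ == "__main__":
    # 88 virtual cores, 64GiB of RAM, an assumed 1.5GiB per job.
    print(build_jobs(64 * 1024, 1536, cpu_count=88))  # 42
```

With those assumed numbers the RAM limit, not the core count, decides the result, which is the situation where “make -j 88” would start paging.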

The best results of the Z640 and Z4G4 are only 50% faster than the best results of the Z420.

The Z420 has an E5-2620 CPU which is far from the fastest CPU available for that system. The E5-2687W has 8 cores and rates 10,021/1,669 on passmark [8] which is far better than the 5,331/1,114 of the E5-2620. The E5-2687W is the fastest CPU that HP lists as supported by the Z420 and it supports DDR3-1600 RAM as opposed to the DDR3-1333 that is the fastest that the E5-2620 supports. With suitable hardware upgrades the Z420 would probably only take about 20% longer to do builds of the SE Linux policy and other packages that can’t take advantage of more than 8 CPU cores.

The Z4G4 system has 4 RAM channels which means that you should get some performance benefits from having 4 DIMMs. My system currently has 2 and I haven’t yet managed to get more DDR4-2666 DIMMs. But I’d still have expected a W-2125 CPU with 2*DDR4-2666 DIMMs to outperform any E5-26xx CPU with 4*DDR4-2400 DIMMs for tasks that average less than 4 CPU cores.

In retrospect I would have been better off getting a HP Z820 (two socket server with DDR3 RAM) than the first DDR4 systems I got. It seems that for reasonable size builds a two socket system comes close to twice the speed of a single socket system. I did briefly own a HP ML350 two CPU system with DDR3 RAM but it was too noisy for my intended use as a deskside workstation so I sold it.

I plan to do more investigation on BTRFS compression, how to get the best compression without excessive delays and how to recognise when delays are happening. I have some SSDs that have sustained write speeds as low as 15MB/s (Crucial P1 series) so for those I could probably have very high compression levels without slowing the system down.

The fact that BOINC slows things down so much seems to be a bug. When processes are running with the IDLE scheduling class there shouldn’t be such significant delays. Is it due to cache thrashing? How can I best get BOINC suitably throttled when I’m sitting at my workstation? I don’t want BOINC connecting to the local X server (which it repeatedly tries to do). Do I need to tune my kernel for better handling of IDLE scheduling?

When I get more DIMMs in the Z4G4 I need to do more tests to see if it gives an overall performance boost.

Also the Z4G4 system has a BIOS option for “sub NUMA” which basically means treating the different RAM channels on a single CPU as NUMA zones. I enabled that option, which does nothing, presumably because I only have 2 DIMMs. The results when I have 4 DIMMs will be interesting. I will also do some NUMA tests on the Z840 to see what benefits it gives.

I have a selection of RAM speeds that will work in the Z4G4, if I have enough spare time I’ll test what difference that makes for CPU bound tasks that matter to me.

For package building fsync() is not helpful, if the system crashes before it’s done then I will just do the build again. For a build cluster it is probably a good feature and probably doesn’t affect aggregate performance when multiple packages are built at the same time, but for the single user case probably not. I will investigate libeatmydata for package building [9].

The progress in CPUs seems to have slowed down a lot recently. The main benefits seem to be in more CPU cores and for newer sockets with more RAM channels.

The CPUs that do have improvements in single core performance are the i9 series (which mostly doesn’t come with motherboards supporting ECC) and AMD CPUs (which are rare in enterprise class hardware). Maybe I should get a server with an i9 or AMD CPU for tasks that need a fast turn around with a small number of cores. That would probably outperform any CPU designed for large core counts for things like building the policy and setting up test VMs (which depends on package installation speed that is single core bottlenecked).

The W-21xx CPUs seem to offer little benefit over the E5-26xxv4 CPUs and not a lot of benefit over E5-26xx CPUs (with DDR3). Even the W-22xx CPUs look like they aren’t going to offer a lot as they are only an incremental improvement over the W-21xx series. I had considered making the Z4G4 my main desktop workstation after the high end W CPUs become affordable, but it looks like that won’t be worth it until such CPUs drop from the current ebay price of $900 to $100.

I think I’ll keep waiting for a decent socket LGA3647 or DDR5 based server [10] for my next significant upgrade.

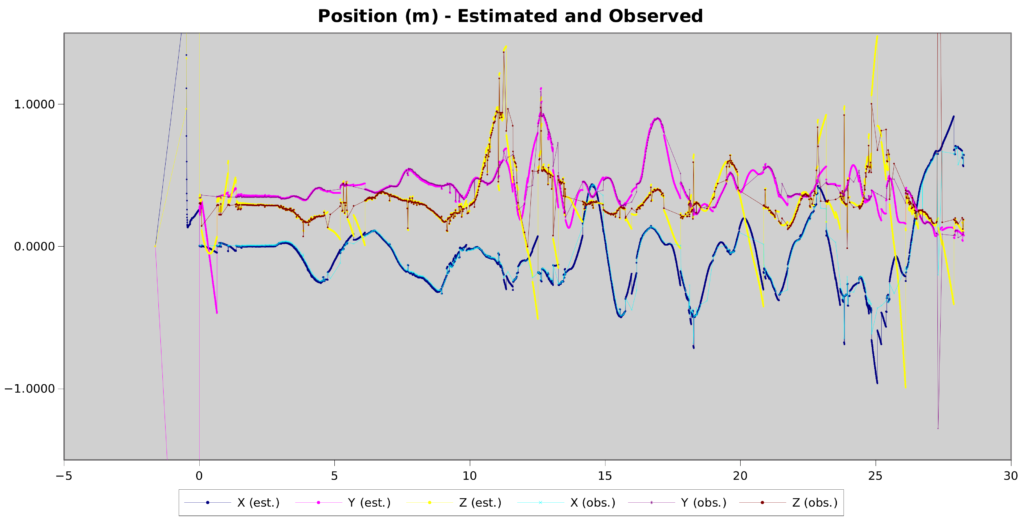

SE Linux policy build results:

| System | BOINC | ZSTD level | CPU Time | Elapsed | CPU% |
|---|---|---|---|---|---|
| Z640 | no | 8 | 248.82user 55.58system | 1:23.88elapsed | 362%CPU |
| Z4G4 | no | 5 | 245.15user 34.63system | 1:24.93elapsed | 329%CPU |
| Z640 | no | 5 | 244.75user 34.87system | 1:25.98elapsed | 325%CPU |
| Z4G4 | no | 10 | 245.21user 35.64system | 1:29.63elapsed | 313%CPU |
| Z640 | no | 8 | 248.71user 55.90system | 1:33.01elapsed | 327%CPU |
| Z640 | no | 10 | 250.90user 55.78system | 1:42.12elapsed | 300%CPU |
| Z640 | yes | 8 | 298.19user 69.30system | 1:59.77elapsed | 306%CPU |
| Z640 | yes | 10 | 300.58user 68.90system | 2:01.53elapsed | 304%CPU |
| Z420 | no | 5 | 359.01user 44.95system | 2:07.33elapsed | 317%CPU |
| Z640 | yes | 5 | 322.40user 71.82system | 2:34.66elapsed | 254%CPU |
| Z420 | yes | 5 | 372.03user 42.95system | 2:42.15elapsed | 255%CPU |
| Z640 | yes | 15 | 299.26user 67.18system | 2:59.77elapsed | 203%CPU |
| Z640 | no | 15 | 250.05user 54.60system | 3:07.61elapsed | 162%CPU |

Warzone 2100 build results:

| System | BOINC | ZSTD level | CPU Time | Elapsed | CPU% |
|---|---|---|---|---|---|
| Z840 | no | 10 | 6549.21user 89.46system | 4:18.90elapsed | 2564%CPU |
| Z840 | no | 5 | 6533.81user 90.50system | 4:19.24elapsed | 2555%CPU |
| Z640 | no | 5 | 7040.87user 183.12system | 7:13.50elapsed | 1666%CPU |
| Z840 | yes | 5 | 8039.52user 169.62system | 8:02.86elapsed | 1700%CPU |
| Z640 | yes | 5 | 7486.44user 205.03system | 11:09.97elapsed | 1148%CPU |
| Z4G4 | no | 5 | 7891.32user 74.45system | 17:48.03elapsed | 745%CPU |
| Z4G4 | no | 10 | 7942.10user 77.43system | 17:58.72elapsed | 743%CPU |

Z4G4 results with and without hyper-threading (HT):

| Build | HT | ZSTD level | CPU Time | Elapsed | CPU% |
|---|---|---|---|---|---|
| Warzone | yes | 5 | 7891.32user 74.45system | 17:48.03elapsed | 745%CPU |
| Warzone | yes | 10 | 7942.10user 77.43system | 17:58.72elapsed | 743%CPU |
| Warzone | no | 5 | 4492.45user 59.09system | 19:59.01elapsed | 379%CPU |
| Warzone | no | 10 | 4497.28user 59.46system | 20:07.15elapsed | 377%CPU |
| Refpolicy | yes | 5 | 245.15user 34.63system | 1:24.93elapsed | 329%CPU |
| Refpolicy | yes | 10 | 245.21user 35.64system | 1:29.63elapsed | 313%CPU |
| Refpolicy | no | 5 | 180.84user 29.74system | 1:32.30elapsed | 228%CPU |
| Refpolicy | no | 10 | 180.29user 30.07system | 1:35.01elapsed | 221%CPU |

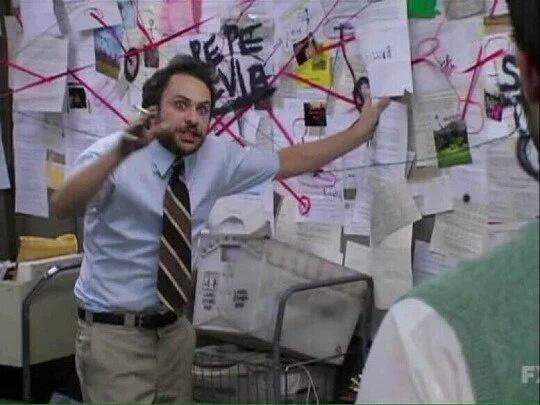

The tech industry is going through massive upheaval. Roles are disappearing, engineers are being laid off by the thousands. Companies are discovering they no longer have a business model, and the global economy is desperately trying to both absorb the impact and understand the implications of what’s going on.

This was the industry 25 years ago, back when I was first getting started in tech around the time of the dotcom crash.

Less than 10 years later, it was happening again: the iPhone had launched, so we’d figured out how to make mobile sites. Then the App Store launched, so we had to figure out how to build apps, instead. The development landscape was changing faster than any one person could keep up: how could you possibly be a good developer now?

Even back then, writing the cleverest, or neatest, or fastest code was only a part of being a good developer. The industry was rapidly iterating on development practices and developer tooling: you didn’t write code in notepad.exe, you used a code editor, or perhaps even an IDE. You didn’t copy files into production, you used version control. These tools had existed in the corporate world for years, of course, but open source alternatives were making them available to anyone who wanted to be a developer. They improved, too: adding auto-complete, templates, and integrating test environments for rapid iteration.

The democratisation continued, too: from the rise of Stack Overflow as a source of code snippets that you could easily re-use, to package managers that made it even easier to re-use code on an industrial scale, through to the proliferation of transpilers and build tools. It got to a point where it didn’t even really matter what language you wrote your code in. As long as you had the right build tool for it, it could run anywhere.

The history of our industry has been defined by a never-ending drive to increase the leverage of every keystroke, but it’s always been at something of a measured pace. But what happens when a giant leap occurs?

A couple of years ago, LLM-based development tools were little more than slightly smarter (but infinitely slower) autocomplete, and it looked like they’d stalled there. A year ago, agentic development tools came along, and it was starting to look like there might actually be something useful here, though they were far too prone to making unacceptable mistakes. More recently, the current generation of agentic tools emerged, and we find ourselves able to generate production-ready code with minimal developer intervention.

So if we no longer need to write code, what becomes of the software developer?

Hint: you’re still coding, just at a different abstraction level. Your job has always been to take a problem, and solve it with software. The exact method you used to produce that software has never been prescribed, you use the right tool for the job.

Right now, the tools are pretty messy. Concepts are changing week to week, state of the art last quarter is today’s legacy. I’m reminded of when the mobile web was first emerging, the horrible CSS hacks we’d previously reserved for Internet Explorer now had to be applied to make things work in mobile browsers, too. When we first started building mobile websites, they were entirely different code bases, but we slowly figured out how to make the same code work everywhere. The change came as a hurricane, the tooling followed later, cleaning up the mess.

Once again, the tech industry is in a state of upheaval. The good news is, the exact same mindset that made you a good developer previously is what will keep you being a good developer today. Some of the bad habits we picked up along the way might have to go, though.

You can’t just look at the code in front of you any longer. Closing out your 5 tickets for the sprint won’t help you use agentic tools: it’s impossible to stick to your little corner of the machine if the tooling is suddenly able to reach everywhere. You need to learn how the entire system works: not necessarily so you can create code that spaghetti-fies its way through the system, but so you can know when it’s right to make changes elsewhere.

Think about the tools that always made it easiest for you to create good code. From the simplest code formatter to the most comprehensive test suite: these tools gave you fast, reliable, timely, and actionable feedback that you can act upon. The exact same tooling is what will give you the best output from agentic development tools today.

The same is true of process. Well-written, unambiguous requirements produced in a collaborative, iterative fashion create clear guidelines for what needs to be built. Useful code reviews that help you learn more about the system you’re building inside of ensure you’ve built it the right way. Comprehensive real world testing with clear, actionable feedback checks that you’ve solved the problem.

Creating great software was never about being the most brilliant coder, it’s always been about creating an environment where great software comes naturally: that’s as true today as it was yesterday.

Information sharing has always been a key driver in pushing software engineering practices forward. From the global Open Source movement right down to how your team works together, sharing compounds exponentially. Where one developer with a well-configured CI pipeline raises the quality of their own output, an entire team on the same pipeline raises the floor for everyone.

Start with shared guardrails. If you’ve set up a test coverage ratchet or a linter config that’s been improving your own work, propose it to the team. Champion it in a PR, explain how it caught something that would otherwise have been missed, and make it part of your shared CI standard. Remember that you’re not trying to impose process, you’re aiming to help the team’s agents work to the same standard as yours. When everyone’s feedback loop is well-configured, the whole team moves faster with less rework.
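If the idea of a coverage ratchet is new, the core of it fits in a few lines: coverage may go up, and the bar goes up with it, but it may never go down. This is a minimal sketch with hypothetical names and an assumed percentage input; a real setup would read the numbers from your coverage tool and store the baseline in the repository.

```python
def check_coverage_ratchet(current, baseline, tolerance=0.0):
    """Compare current coverage against the stored baseline.

    Returns (passed, new_baseline). The check fails when coverage has
    dropped by more than tolerance; when it passes, the baseline only
    ever moves upwards.
    """
    if current + tolerance < baseline:
        return False, baseline
    return True, max(current, baseline)


if __name__ == "__main__":
    # Coverage improved from 82.0% to 83.5%: pass and raise the bar.
    print(check_coverage_ratchet(83.5, 82.0))  # (True, 83.5)
    # Coverage dropped to 80.0%: fail and keep the old bar.
    print(check_coverage_ratchet(80.0, 82.0))  # (False, 82.0)
```

Because the rule is mechanical, it gives an agent the same fast, unambiguous feedback it gives a human.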

Work on shared review culture. In code reviews, start asking questions like “what guardrails did you put in place?” and “how did you verify the agent’s output?” You’re not looking for gotchas: be genuinely curious, and find the patterns that can help evolve the tooling everyone uses.

Talk about what “done” looks like. Define acceptance criteria and type checks that everyone agrees on, so that agents across the team are working to the same standard, instead of individual interpretations of what’s good enough. Always remember that you’re not looking to create bureaucracy, you’re defining alignment: when developers and agents share a common north star, when you’re pulling in the same direction, the job becomes easier and you can move onto new and bigger opportunities.

There are big changes happening in the industry right now, the practice of software engineering is evolving to encompass agentic development tools. Your choice remains, however: just as you could always choose whether you prefer to use a full IDE, a code editor, or notepad.exe. Now you can choose to use the frontier LLMs, you can choose self-hosted LLMs. You can use the myriad of available harnesses, you can write something that works just for you. Previous generations of software engineering practices didn’t disappear because something new came out, they adapted to work with the best bits, and kept the rest optional.

This is a time of exciting and challenging opportunities: just as has happened before, we’re defining what the practice looks like for the coming decades. Start with something small, perhaps have a chat with your lead about what their view is, I promise they’re trying to figure out what it all means just as much as you are! Now is a good time to take care of technical debt: agents work best in a consistent environment, so cleaning up some of those old abstractions and interfaces will help increase the momentum. Keep learning: my years in this industry have taught me that nothing ever stays the same, there’s only one way to continue improving your craft.

Feed your curiosity.

Continuations 2026/22: Integrated mailers

This week I got the last big piece done before we can make the next Hanami release. Mailers now fully integrate into Hanami apps, with zero necessary boilerplate, just like all our other essential components.

This integration piece was the first real usage test of Hanami Mailer itself, and it drove a couple little improvements: keeping test delivery state at the instance-level, and allowing for a configurable view class for each mailer.

With that done, there’s not a whole lot left before we can ship a release candidate! I’m hoping to get to that in the next ~7-10 days. I want to get some decent docs sorted first, so folks can easily test out all the new things.

With release prep in mind, I also spent some of Friday (which may or may not have been during Game 6 of the Western Conference Finals, #GoSpursGo!) putting together tooling to do bulk review and triage of our repo changelog files.

On Friday I also had the pleasure of announcing SerpApi as Hanakai’s newest sponsor! Thank you, SerpApi! 💛 Financial support like this is how we keep making Hanakai better!

Buoyed by this, I took the chance to put some more work into our sponsorship program, contacting all our sponsors who’re nearing their first year of support. I’m looking forward to doing another small “sponsorship drive” this year, but I think that will need to wait until our Hanami release is properly out. I don’t have the capacity to do both at once.

Other code stuff done this week: I removed some legacy params logic from Hanami Action, and reviewed Sean bringing another nice perf post to Hanami Action, and Paweł bringing some serenity to production logs by tweaking our default log levels.

Continuations 2026/21: Big cake to walk

I finished off Hanami’s streamlined i18n support this week! I had built the core functionality quite a while ago, and thought that adding view helpers would be a cakewalk. In the end, this turned out to be another 80% after the first 80%.

So this week, I: reimplemented I18n’s #localize so it can respect slice isolation, bundled English language defaults available via a new shared_load_path setting, added i18n view helpers, tracked the currently-rendering template in Hanami View, used this to add relative i18n key lookup in view helpers, added i18n helpers to actions as well, and then finally, added i18n to the Gemfile and generated placeholder en.yml files in new apps. That’s one big cake to walk, but I hope it will be a delicious end result.

Paweł merged a setting to configure the log level for Hanami’s DB logs, and we hashed out a plan to keep the production logs leaner: by default, DB logs will have the :debug severity level, while in production, the app logger will log at :info level only.

Some nice Dry CLI movement this week: I merged the nicer `example` DSL that Aaron and I built together, and Paweł merged typecasting support. It feels like we’re not far off being able to ship v2.0 of this gem.

I merged a tiny fix to Hanami RSpec to prevent factory leakage across tests.

Nikita became the third of our team to ship a new gem release via Release Machine! This time it was Dry AutoInject v1.2.0, which comes with two brand new RuboCop cops to keep your injector mixins consistent, and one extremely gnarly bugfix from Adam. This was a great release!

Amid all of this, I even found some time to work on my own personal Hanami app. Now we have two fully working “write a review” flows for Decaf Sucks, for a new cafe as well as for an existing cafe. I’m thinking that making this app feature complete and ready-to-deploy might be a good project for Ruby Retreat next month 😊

We’re on the home straight for our next Hanami release. In the next week or so, I’m going to try and knock off all the remaining little issues, and get as much new documentation written as I can. After that, 3.0.0.rc1.

Ron Garrett wrote an interesting blog post about the mathematical possibility of abiogenesis [1].

The Register has an informative article about the threat that management systems built in to Intel and AMD CPUs pose to data sovereignty in EU owned cloud providers [4]. But this is just the first stage of building sovereign clouds. All significant cloud services run at least 2 types of CPU, so adding EU manufactured CPUs at a future time will be easy.

Michael Prokop wrote an interesting blog post about debugging input event problems on Linux which turned out to be due to an analogue headphone connection [8]. This gave me some useful pointers to investigating an input device problem which is probably very different.

Tianon Gravi wrote an informative blog post about containers, Debian, and Docker options [12]. We need a lot more work on these sorts of things in Debian.

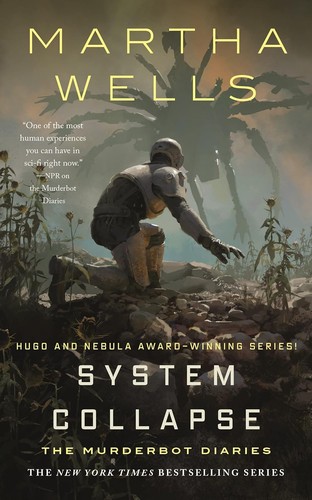

System Collapse

Once again a relatively short but enjoyable Murderbot book. I think it’s endearing that Murderbot continues to develop as a character, even if some of the tropes are feeling a little worn around the edges. Yes they get injured, yes they do some combat, yes they’re sarcastic. On the other hand I think this story line is both unique compared to the previous ones, and builds reasonably upon the previous book. Honestly though, this and the previous book probably should have been one volume.

Last year I blogged about using Zram for VMs [1]. That setup is still working well for VMs and for phones and laptops with no swap device.

I have just read Chris Down’s insightful blog post about Zswap vs Zram [2] which convinced me to set up Zswap on some systems. I have had some of the problems that were described in his blog post when trying to run Zram on workstation and server systems.

One limitation of zswap is that it doesn’t allow specifying the compression level. For zram I can put the following in /etc/systemd/zram-generator.conf to set the zstd compression level (this works well on my Thinkpad X1 Carbon Gen6):

    [zram0]
    compression-algorithm=zstd(level=10)

For the BTRFS filesystem I can put “compress=zstd:13” in the mount options to specify the compression level. They really should support different compression levels in zswap. The ideal compression level depends on the speed of the CPU and new CPUs keep getting faster.

The documentation says to use something like the following on the kernel command-line to enable zswap:

zswap.enabled=1 zswap.compressor=zstd zswap.max_pool_percent=20 zswap.shrinker_enabled=1

The max_pool_percent=20 setting is the default which means to use up to 20% of system RAM for compressed data. I’ve seen documentation suggesting up to 50% which seems a little excessive.
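
As a rough illustration of what that percentage means in bytes, here is a minimal sketch. The function name `zswap_pool_cap_bytes` is made up for this example and is not part of any zswap tooling. It uses integer arithmetic, so the result is rounded down.

```shell
#!/bin/bash
# Hypothetical helper (not part of any zswap tooling): the largest size the
# compressed pool may reach, in bytes.
#   $1 = max_pool_percent value
#   $2 = total system RAM in bytes
zswap_pool_cap_bytes() {
    local percent=$1 ram_bytes=$2
    # Integer arithmetic, so the result is rounded down to a whole byte.
    echo $(( ram_bytes * percent / 100 ))
}
```

On a running system it could be called as `zswap_pool_cap_bytes "$(</sys/module/zswap/parameters/max_pool_percent)" "$(( $(getconf PAGESIZE) * $(getconf _PHYS_PAGES) ))"`. For a machine with 8GiB of RAM the default of 20 gives a cap of about 1.6GiB, while 50 would give 4GiB.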

Note that a lot of documentation says to use zswap.zpool=z3fold, but z3fold is going to be removed and zsmalloc (the default) is recommended [3].

There is documentation about changing the compression algorithm via kernel command-line parameters. On Debian only lzo is linked in to the kernel and zstd (my preferred option) is a module, so the kernel command line can’t be used to set zstd, but the following command works:

echo zstd > /sys/module/zswap/parameters/compressor

The shrinker_enabled option is to allow the kernel to evict cold pages without waiting for memory pressure.

You can enable zswap without rebooting by running commands like the following. You could even put them in /etc/rc.local or something, but I think putting it in the kernel command line is a good idea as it makes it obvious to the next sysadmin what is happening.

    echo 1 > /sys/module/zswap/parameters/enabled
    echo zstd > /sys/module/zswap/parameters/compressor
    echo 1 > /sys/module/zswap/parameters/shrinker_enabled

The following command is documented as a way of finding out what zswap is doing:

    # grep -r . /sys/kernel/debug/zswap/
    /sys/kernel/debug/zswap/stored_pages:262541
    /sys/kernel/debug/zswap/pool_total_size:455266304
    /sys/kernel/debug/zswap/written_back_pages:384
    /sys/kernel/debug/zswap/reject_compress_poor:0
    /sys/kernel/debug/zswap/reject_compress_fail:160911
    /sys/kernel/debug/zswap/reject_kmemcache_fail:0
    /sys/kernel/debug/zswap/reject_alloc_fail:0
    /sys/kernel/debug/zswap/reject_reclaim_fail:0
    /sys/kernel/debug/zswap/pool_limit_hit:0

The following command gives the zswap compression ratio, which is 2.36 for this example:

echo "scale=2; " $(</sys/kernel/debug/zswap/stored_pages) " * $(getconf PAGESIZE) /" $(</sys/kernel/debug/zswap/pool_total_size) | bc

This table documents my current understanding of the debug values. The difference between reject_compress_fail and reject_compress_poor isn’t clear in a lot of the documentation, even reading the source didn’t make it easy to understand.

| File | Meaning (LC is lifetime count) |
|---|---|
| pool_limit_hit | LC pool limit hit and pages are forced to the swap partition |
| pool_total_size | RAM used for zswap data |
| reject_alloc_fail | LC can’t allocate memory because max_pool_percent has been reached |
| reject_compress_fail | LC of pages with a compression algorithm failure so go straight to swap partition |
| reject_compress_poor | LC of pages that can’t compress so go straight to swap partition |
| reject_kmemcache_fail | LC kernel malloc failure (serious problem?) |
| reject_reclaim_fail | LC failure to move a page from compressed RAM to disk – serious problem! |
| stored_pages | Swapped pages stored by zswap |
| written_back_pages | LC of pages written back to swap partition from zswap |
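
To make the counters in the table easier to scan, here is a minimal sketch that lists them one per line and adds up every `reject_*` counter. Both function names are hypothetical, and the directory is a parameter only so the functions can be tried against a copy of the files. Reading the real directory normally needs root and a mounted debugfs.

```shell
#!/bin/bash
# Hypothetical helpers for reading the zswap debug counters.
#   $1 = directory holding the counters (default: /sys/kernel/debug/zswap)

# Print each counter as "name value", in glob (alphabetical) order.
zswap_counters() {
    local dir=${1:-/sys/kernel/debug/zswap} f
    for f in "$dir"/*; do
        [ -f "$f" ] || continue
        printf '%s %s\n' "${f##*/}" "$(<"$f")"
    done
}

# Sum every reject_* counter, i.e. the lifetime count of pages zswap refused.
zswap_total_rejects() {
    local dir=${1:-/sys/kernel/debug/zswap} f total=0
    for f in "$dir"/reject_*; do
        [ -f "$f" ] || continue
        total=$(( total + $(<"$f") ))
    done
    echo "$total"
}
```

With the example values shown earlier the only non-zero reject counter is reject_compress_fail, so the total would be 160911.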

All of this is not nearly as easy to understand as the following command for zram:

    # zramctl
    NAME       ALGORITHM DISKSIZE DATA COMPR TOTAL STREAMS MOUNTPOINT
    /dev/zram0 zstd          7.7G 2.1G  375M  386M       4 [SWAP]

The Debian Wiki page about Zswap is very brief [4] and needs more description about this. I think a lot of Debian users will use zram instead of zswap because setting up zram is just a single apt command. I’m not planning to immediately add to that wiki page because I’m not an expert on this, but I would appreciate comments on this blog post from others who have got zswap working. I will update the wiki if others report matching experiences to mine.

I’m now using zswap on a few systems including my main home workstation which had performed poorly with zram and a swap device in the past. If that goes well I’ll put it on other systems.

I wrote the following shell script to display zswap stats, consider it GPL if you want to use it:

    #!/bin/bash
    if [ ! -f /sys/kernel/debug/zswap/stored_pages ]; then
      echo "ZSwap not enabled"
      exit 0
    fi
    PAGES=$(</sys/kernel/debug/zswap/stored_pages)
    PAGESIZE=$(getconf PAGESIZE)
    RAM=$(echo "$PAGESIZE * " $(getconf _PHYS_PAGES) | bc)
    POOL=$(</sys/kernel/debug/zswap/pool_total_size)
    if [ "$POOL" == "0" ]; then
      echo "ZSwap not used yet"
      exit 0
    fi
    COMP=$(</sys/module/zswap/parameters/compressor)
    echo -n "$COMP compression ratio: "
    echo "scale=2; $PAGES * $PAGESIZE / $POOL" | bc
    echo -n "RAM%: "
    echo "100 * $POOL / $RAM" | bc

We have a new Linux exploit called PinTheft [1]. I did some tests of it with Debian kernel 6.12.74+deb13+1-amd64.

When I run the exploit as user_t I see the following in the audit log:

type=PROCTITLE msg=audit(1779615031.043:15540): proctitle="./exp"

type=AVC msg=audit(1779615031.043:15541): avc: denied { create } for pid=1360 comm="exp" scontext=user_u:user_r:user_t:s0 tcontext=user_u:user_r:user_t:s0 tclass=rds_socket permissive=0

type=SYSCALL msg=audit(1779615031.043:15541): arch=c000003e syscall=41 success=no exit=-13 a0=15 a1=5 a2=0 a3=0 items=0 ppid=879 pid=1360 auid=1000 uid=1000 gid=1000 euid=1000 suid=1000 fsuid=1000 egid=1000 sgid=1000 fsgid=1000 tty=pts0 ses=1 comm="exp" exe="/home/test/b/pocs/pintheft/exp" subj=user_u:user_r:user_t:s0 key=(null)ARCH=x86_64 SYSCALL=socket AUID="test" UID="test" GID="test" EUID="test" SUID="test" FSUID="test" EGID="test" SGID="test" FSGID="test"

The last of the output of running the exploit is the following:

    [-] only stole 0/1024 refs — may not be enough
    [-] too few stolen refs, aborting
    [-] attempt 5 failed, retrying...
    [-] all 5 attempts failed

When I ran it as unconfined_t it gave the same output, and strace showed many calls like the following:

socket(AF_RDS, SOCK_SEQPACKET, 0) = -1 EAFNOSUPPORT (Address family not supported by protocol)

After I ran “modprobe rds” the exploit worked as unconfined_t with the following output:

    [*] verifying page cache overwrite...
    [*] page cache page 0 AFTER overwrite (our shellcode) (129 bytes):
    0000: 7f 45 4c 46 02 01 01 00 00 00 00 00 00 00 00 00 |.ELF............|
    0010: 03 00 3e 00 01 00 00 00 68 00 00 00 00 00 00 00 |..>.....h.......|
    0020: 38 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 |8...............|
    0030: 00 00 00 00 40 00 38 00 01 00 00 00 05 00 00 00 |....@.8.........|
    0040: 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 |................|
    0050: 2f 62 69 6e 2f 73 68 00 81 00 00 00 00 00 00 00 |/bin/sh.........|
    0060: 81 00 00 00 00 00 00 00 31 ff b0 69 0f 05 48 8d |........1..i..H.|
    0070: 3d db ff ff ff 6a 00 57 48 89 e6 31 d2 b0 3b 0f |=....j.WH..1..;.|
    0080: 05 |.|
    [+] verification PASSED — page cache overwritten with SHELL_ELF
    [+] executing /usr/bin/su (now contains setuid(0) + execve /bin/sh)...
    === RESTORE: sudo cp /tmp/.backup_su_13294 /usr/bin/su && sudo chmod u+s /usr/bin/su ===
    #

SE Linux in a “strict” configuration stops this exploit.

The test VM is running Debian/Testing. I haven’t bothered investigating whether it’s a default setting for Debian to not load the rds module or whether it was some change that I made either directly or indirectly. Security via SE Linux is of more interest to me than security via controlling module load.
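
For anyone who does want to check the module side before testing, here is a minimal sketch that reports whether a named module is currently loaded, based on the first field of each line in /proc/modules. The function name `module_loaded` is hypothetical, and the optional second argument exists only so it can be tried against a saved copy of the file.

```shell
#!/bin/bash
# Hypothetical helper: succeed (exit status 0) if the named kernel module is
# listed as loaded, fail (exit status 1) otherwise.
#   $1 = module name, e.g. rds
#   $2 = file in /proc/modules format (default: /proc/modules)
module_loaded() {
    local name=$1 modules_file=${2:-/proc/modules}
    # Compare the whole first field so that "rds" does not match "rds_tcp".
    awk -v m="$name" '$1 == m { found = 1 } END { exit !found }' "$modules_file"
}
```

A check such as `module_loaded rds && echo "rds is loaded"` before running the exploit would show which of the two cases above applies.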

Continuations 2026/20: Repeated reads

A proud moment this week! After Sean made a nice performance related enhancement to Dry Configurable (now you can call #to_data on your config to get a data object that’s optimised for fast repeated reads), he became the first of my teammates to cut a new gem release via Release Machine! Thank you Sean, I’m looking forward to more of this in the future. 😃

This was another week of PR reviews. Of note: improving Hanami View performance by using the new Dry Configurable data object (thanks Sean!), a Dry Types extension for Dry CLI (thanks Paweł!), making Dry Initializer usable from non-main Ractors (thanks Nikita!), a fix for array variables in Hanami Router route expansions (thanks Edouard!), and a fix for Hanami Router’s form body parsing (thanks again Sean!).

I put together another nice enhancement to Dry Operation, making it possible to give names to steps, which are then surfaced to our on_failure hook, making it easier for you to handle different step failures in particular ways. This was thanks to an issue filed against our docs, which (incorrectly) advertised this capability even before it existed. I fixed the docs, but I figured why not make the gem better too!

I merged the Hanami Assets bundler specification that I prepared a few weeks ago so I could share it in this forum discussion about third-party bundlers. And within a day, Wout already improved bun_bun_bundle’s Hanami compatibility! Thanks Wout! I’m looking forward to this being the first of many steps to support pluggable bundlers while still preserving a complete and consistent experience for our users.

After all of that, there wasn’t much time left for building out the final steps for our i18n support. I’m part way through shipping a fix to how #localize works within our per-slice isolated I18n backends (turns out the I18n gem loves it some global state). Once this is done it should hopefully be straightforward to finish off the other parts, including shared translation files and view helpers. More on that (hopefully) next week!

I just tested out the ssh-keysign-pwn exploit [1] on Debian kernel 6.12.74+deb13+1-amd64 which was released before these exploits.

When sshkeysign_pwn is run as user_t the following is logged in the audit log and it fails to exploit anything:

type=SYSCALL msg=audit(1778831599.951:22353257): arch=c000003e syscall=438 success=no exit=-1 a0=3 a1=c a2=0 a3=1b8020 items=0 ppid=5632 pid=6654 auid=1000 uid=1000 gid=1000 euid=1000 suid=1000 fsuid=1000 egid=1000 sgid=1000 fsgid=1000 tty=pts0 ses=144 comm="sshkeysign_pwn" exe="/home/test/a/ssh-keysign-pwn/sshkeysign_pwn" subj=user_u:user_r:user_t:s0 key=(null)ARCH=x86_64 SYSCALL=pidfd_getfd AUID="test" UID="test" GID="test" EUID="test" SUID="test" FSUID="test" EGID="test" SGID="test" FSGID="test"

type=PROCTITLE msg=audit(1778831599.951:22353257): proctitle="./sshkeysign_pwn"

type=AVC msg=audit(1778831599.951:22353258): avc: denied { ptrace } for pid=6654 comm="sshkeysign_pwn" scontext=user_u:user_r:user_t:s0 tcontext=user_u:user_r:user_t:s0 tclass=process permissive=0

When it is run as unconfined_t the contents of the /etc/ssh/ssh_host_ecdsa_key file are correctly displayed on standard out in about 10ms. The file in question is only readable by root, yet a non-root user can use this exploit to read it.

It wouldn’t be uncommon to have a system configured to allow users to trace their own processes. The following policy addition grants access for the user to trace their own processes:

allow user_t self:process ptrace;
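
One way such a rule could be loaded is as a small local policy module. The sketch below only writes the module source. The module name `local_user_ptrace` and the function name are hypothetical, and the build commands in the comments are the usual checkmodule, semodule_package and semodule sequence rather than anything specific to this test setup.

```shell
#!/bin/bash
# Write a minimal SELinux policy module source file containing the rule above.
#   $1 = output path for the .te file
# The module name local_user_ptrace is a hypothetical name for this sketch.
write_user_ptrace_te() {
    cat > "$1" <<'EOF'
module local_user_ptrace 1.0;

require {
    type user_t;
    class process ptrace;
}

allow user_t self:process ptrace;
EOF
}

# Typical build and install steps (run as root, not executed here):
#   write_user_ptrace_te local_user_ptrace.te
#   checkmodule -M -m -o local_user_ptrace.mod local_user_ptrace.te
#   semodule_package -o local_user_ptrace.pp -m local_user_ptrace.mod
#   semodule -i local_user_ptrace.pp
```

Removing the module again with `semodule -r local_user_ptrace` returns the system to the stricter behaviour.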

With that in place the sshkeysign_pwn exploit still doesn’t work and there are logs like the following:

type=AVC msg=audit(1778833455.726:57355191): avc: denied { read } for pid=6941 comm="ssh-keysign" name="ssh_host_rsa_key" dev="vda" ino=15492 scontext=user_u:user_r:user_t:s0 tcontext=system_u:object_r:sshd_key_t:s0 tclass=file permissive=0

type=SYSCALL msg=audit(1778833455.726:57355191): arch=c000003e syscall=257 success=no exit=-13 a0=ffffffffffffff9c a1=55eadec43061 a2=0 a3=0 items=0 ppid=6933 pid=6941 auid=1000 uid=1000 gid=1000 euid=0 suid=0 fsuid=0 egid=1000 sgid=1000 fsgid=1000 tty=pts0 ses=144 comm="ssh-keysign" exe="/usr/lib/openssh/ssh-keysign" subj=user_u:user_r:user_t:s0 key=(null)ARCH=x86_64 SYSCALL=openat AUID="test" UID="test" GID="test" EUID="root" SUID="root" FSUID="root" EGID="test" SGID="test" FSGID="test"

So if you could find some secret data in a file that’s only restricted by Unix permissions and user_t is granted ptrace access then a variant of that exploit could work.

When user_t is allowed ptrace access the chage_pwn exploit fails with the following log entries, so any binary that runs in a different domain can’t be used in that situation.

type=AVC msg=audit(1778833908.020:57434896): avc: denied { ptrace } for pid=7037 comm="chage_pwn" scontext=user_u:user_r:user_t:s0 tcontext=user_u:user_r:passwd_t:s0 tclass=process permissive=0

type=SYSCALL msg=audit(1778833908.020:57434896): arch=c000003e syscall=438 success=no exit=-1 a0=3 a1=5 a2=0 a3=1b7e00000000 items=0 ppid=5632 pid=7037 auid=1000 uid=1000 gid=1000 euid=1000 suid=1000 fsuid=1000 egid=1000 sgid=1000 fsgid=1000 tty=pts0 ses=144 comm="chage_pwn" exe="/home/test/a/ssh-keysign-pwn/chage_pwn" subj=user_u:user_r:user_t:s0 key=(null)ARCH=x86_64 SYSCALL=pidfd_getfd AUID="test" UID="test" GID="test" EUID="test" SUID="test" FSUID="test" EGID="test" SGID="test" FSGID="test"

In a “strict” configuration with users having the user_t domain a Debian system is not vulnerable to these exploits unless there is some configuration error or some unusual configuration choices. Users with the unconfined_t domain can successfully run the exploits.

Continuations 2026/19: Ebbs and flows

One thing I’ve seen in my time on these projects is that the extra help comes in ebbs and flows. In this last little while, we’ve seen some good flow, so most of my time this week was reviewing work from the team.

Here’s some of what I reviewed, all really big, notable things: Prevent parent-class injection from discarding pass-through args, a fantastic, hard won fix in Dry AutoInject from Adam; Add dependency order cop, some helpful dev tooling for AutoInject from Nikita (it’s so great to see him back!); Add Config#to_data to allow faster reads in Dry Configurable, a very nice performance bump from Sean; a Dry Types extension for Dry CLI from Paweł; Include rouge gem in Gemfile for new apps, allowing upcoming Hanami apps to have SQL syntax highlighting from Kyle; Refactor ‘example’ DSL for better consistency and clarity, a genuine quality of life improvement for our v2 of Dry CLI, from Aaron. Thank you everyone for your work!

Earlier in the week I did some of my own work to get Hanami Assets JS into shape for the upcoming release. It will now update for changes to static files in watch mode, detect newly added entry points in watch mode, and also skip over junk files like .DS_Store when bundling assets. Also some housekeeping: bumped our required Node version, switched to Vitest, and set up a dedicated release-machine workflow for this as our solitary Node package!

I finished by writing up a spec document for how asset bundlers should behave to conform with Hanami Assets. This will hopefully help make things clearer for third-party bundlers that want to work with our asset structure and manifest format.

With my asset ducks in a row, I’ve now turned to the next steps for our new i18n support. Nothing quite ready to show yet, but I’ve been working on making it possible to share common translations across slices, fixing another issue with the i18n gem not playing nicely with our per-slice isolations, and setting up view helpers for i18n. Probably another week or more until this is all in good shape. More on that soon!

The Man Who Knew the Way to the Moon by Todd Zwillich

The story of John C. Houbolt, a NASA engineer who pushed for Lunar Orbit Rendezvous for the Apollo program. Just 3 hours long but interesting. 3/5

Who Owns This Sentence: A History of Copyrights and Wrongs by David Bellos & Alexandre Montagu

A look at the almost random ways and reasons copyright has changed over the centuries usually as different groups lobbied governments. 3/5

The Six: The Untold Story of America’s First Women Astronauts by Loren Grush

A fairly balanced biography of the 6 astronauts, covering the time before and during their astronaut careers and to an extent afterwards. Worth a read for space fans. 4/5

My Audiobook Scoring System

I got brave this morning and made the first public release of instar, version 0.2. Here’s a snippet from what I sent to the openstack-discuss mailing list about this:

Instar is a from scratch rethink of how to handle untrusted image data as well as an opportunity for me to become more familiar with code generation LLMs. All data processing happens in a custom KVM guest which does not run an operating system or have any access to system resources apart from those provided to it by the custom VMM which orchestrates it. The best way to think of this guest is as an embedded system running on a virtual CPU. This is similar in approach to how AWS Nitro Enclaves work in terms of prior art, whilst also being the opposite in intent: Nitro protects sensitive data from the cloud; instar protects the cloud from malicious data. To be clear, `instar info` doesn’t detect or block malicious images — it processes them safely and reports what it finds. The security comes from containment, not detection. It is then up to the caller to decide how to handle what is reported.

You can read more about instar on shakenfist.com, or the full openstack-discuss post.

I have done some packaging work on Amazfish (the smart-watch software that works with the PineTime among others) for Debian. Here is my Git repository for libnemodbus (a dependency for Amazfish that isn’t in Debian) [1]. Here is my Git repository for Amazfish itself [2].

These packages are currently using QT5 which is a good reason to not upload them now as the transition to QT6 is in progress. Patching them to work with QT6 (as the libnemodbus upstream is apparently not migrating to QT6 yet) shouldn’t be that difficult but is something that needs some care and communication to get it right.

Running this package on my laptop with my PineTime (which worked very reliably when run by GadgetBridge on Android) wasn’t reliable and the PineTime would disconnect and refuse to connect again. Doing it on the Furilabs FLX1s gave a similar result. If Amazfish was the only Bluetooth program having problems on my laptop and on my FLX1s then I’d blame it, but both those systems have some other Bluetooth issues.

Running this on my laptop, Amazfish would send its own test notifications to my watch but system notifications (from notify-send among others) wouldn’t get sent. Running this on my FLX1s I got ONE notification from my network monitoring system sent to my watch before my phone and watch stopped talking to each other.

To make things even more difficult for me the harbour-amazfish-ui program doesn’t work correctly with the libraries installed on my FLX1s and doesn’t display the content of many screens but it works correctly when running in a container environment with stock Debian/Testing.

Below is the script that I’m currently using to launch apps in a Debian/Testing container on my FLX1s. The comment about unshare-user doesn’t apply to this version of the script but I left it in to avoid the potential for future confusion. The Furilabs people diverted the bwrap binary and have a wrapper that removes a set of parameters that they think will cause problems.

    #!/bin/bash
    set -e
    BUILDBASE=/chroot/testing
    # bwrap: Can't mount proc on /newroot/proc: Device or resource busy
    # get the above with --unshare-user and --unshare-pid
    exec bwrap.real --bind /tmp /tmp --bind /run /run --bind $HOME $HOME \
      --ro-bind $BUILDBASE/etc /etc --ro-bind $BUILDBASE/usr /usr \
      --ro-bind $BUILDBASE/var/lib /var/lib \
      --symlink usr/bin /bin --symlink usr/sbin /sbin --symlink usr/lib /lib \
      --proc /proc --dev-bind /dev /dev --die-with-parent --new-session "$@"

Due to the range of problems I’m having I think it would be best to pass this package on to someone else who has a different test setup. It could be that further testing will reveal that my issues are related to bugs in Amazfish but I can’t prove it either way at this time. Maybe when using a smart watch other than a Pine Time it will work more reliably but it seems most likely that my laptop and phone are to blame. I can’t make more progress on this now.

Discussion of “AI” systems seems to be dominated by fears of uncommon and unlikely threats. I think that we should be focusing more on real issues with LLMs and with society in general and put the most effort towards the biggest problems.

True Artificial Intelligence [1] (IE a computer that has the mental capacity of a household pet) is something that I think can be developed, but it hasn’t been developed and we don’t have good plans for developing it. We seem to be a lot further away from achieving that goal than we were from landing on the moon in 1962 when JFK gave his historic speech.

What we have is a variety of pattern recognition systems that can predict what fits into a pattern. The most well known type of Machine Learning (ML) is the Large Language Model (LLM) which means ChatGPT and similar systems which predict which text would be likely to come next and can make an essay from it. They can give interesting and useful output, but there is no thought behind it, it’s just a better form of Eliza (the famous program from 1964 that simulates conversation by pattern matching) [2]. By analysing billions of documents, storing the data in a condensed mathematical way, and then using computation to extract from that record LLMs can produce output that is unfortunately considered by some people to be good enough to include in legal documents submitted to courts, university assignments, and many other documents. But they do so without even having the thinking ability of a mouse.

To call current systems “AIs” without any significant qualifiers when criticising them is to concede the debate about the worth of such things.

If we develop AIs that can actually think we will have to deal with the issues in the SciFi horror short story Lena by qntm [3].

Here is a list of some of the most unreasonable arguments I’ve seen against “AI” which distract attention from real problems both related to “AI” and other problems in society.

Wikipedia has a page listing Deaths Linked to Chatbots [4] which right now has 16 entries from 2023 to Feb 2026. They are all tragedies and as a society we should try to prevent such things. But what I would like to see from the media is some analysis of overall trends. Yes, it gets people’s attention when someone dies in an unusual way, but we need to have attention paid to the more numerous deaths which are preventable. It has become a standard practice to give information on Lifeline in media referencing suicide, and it would be good if they also developed a practice of mentioning the relative incidence of a problem when publishing an article about it.

One of the many factors that cause more suicides than chatbots is school. Scientific American has an informative article from 2022 about the correlation between child suicide and school [5]. It is based on US statistics and shows that the lowest suicide rate is in July (a no-school month in the US) which has a rate of 2.3 per 100,000 person years. So if kids had a quality of life equivalent to July all year round then there would be 2.3 suicides per 100,000 kids every year, while if they had a quality of life equivalent to a Monday in January or November it would be 3.9 suicides per 100,000 kids every year. The article states “Any time I present these data to teachers, parents, principals or school administrators, they are shocked. This should be common knowledge.” It is common knowledge to anyone who takes any notice of what happens in schools, but paying attention to serious problems is unpleasant and it’s more fun to pretend that school is good for everyone. No parent wants to think that they sent their child to a place that was horrible, and no teacher wants to think that they are part of a system that harms kids.

The US CDC has an informative article about youth suicide [6] which documents it as the 3rd largest cause of death in the 14-18 age range for 2021. This article was published in 2024 and based on statistics from 2023 and earlier. It notes significant differences in suicides, attempts, and “persistent feelings of sadness or hopelessness” which had girls at more than twice the rate of boys and “LGBQ+” kids at more than twice the rate of “heterosexual” students. It seems obvious that misogyny and homophobia are correlated with suicide and that’s something that could and should be addressed in schools. My state has a Safer Schools program [7] to try and alleviate the problems related to homophobia, but I expect that things are getting worse in the US in that regard. 39.7% of kids in US high schools had “persistent feelings of sadness or hopelessness” before LLMs became popular. School could and should be a happy time for the vast majority of kids but instead almost half of the kids don’t enjoy it and a majority of girls and “LGBQ+” kids don’t. Having no mention of trans kids is a significant omission from that article. Based on everything I’ve heard from trans people I expect that their statistics would be even worse.

One could argue that the small number of deaths inspired by use or misuse of LLMs is an indication of a larger number of people suffering in ways that don’t result in death and don’t get noticed. But I don’t think that can compare to the fact that the majority of girls and “LGBQ+” kids have “persistent feelings of sadness or hopelessness” in the current school system.

Regarding homicide, the Australian Institute of Criminology has an article showing that in the 2003-2004 time period 49% of homicides of women were listed as a “domestic argument” [8]. That’s something that could and should be addressed. That article claimed 308 homicide victims in that time period, which is larger than the world-wide death toll from LLMs but also less than 1/3 the death toll from car accidents in Australia. Australia has less than 0.4% of the world population, a fairly low homicide rate, and a number of homicides that vastly outnumbers all world homicides related to LLMs.

I think it’s great to address any cause of suicide or homicide, but devoting government resources and legislation towards very uncommon causes instead of things that happen every day is not a good strategy. It would be fine to address all factors leading to suicide, but problems with the school system have been a major factor for decades with little effort applied to fix it.

There is evidence of criminals using LLMs to help prepare for crimes. The ability to generate large amounts of text quickly can be used for fraud and extortion. This is going to be a serious problem and we need structural changes to society to deal with it. There is an ongoing issue of scammers convincing older people that their child or other young relative is in trouble and a large amount of cash is required to address it. This sort of scam as well as the more well known “Nigerian” scams will probably become more common as the cost of running them decreases. This may be more of a problem for people in developing countries, as currently a common scam business model is to have people in regions where wages are low (such as Pakistan, in the case of one person I spoke to) scamming people in relatively wealthy countries like Australia so an attack with a low probability of success is financially viable. Cheaper attacks will make less affluent victims financially viable to the scammers.

While writing this post I received a financial scam phone call trying to get me to invest in SpaceX that was run by an “AI” chat system, I expect to receive more of them and this is something that needs to be dealt with via both technical measures and legislation.

Do we have to accept less freedom and less anonymity in finances as a cost of reducing financial crime? Greater restrictions on the use of cash would make some crimes more difficult or less profitable for criminals. As a society I think we need to have a discussion about a balance between financial freedom and freedom from criminal exploitation, failing to have such a discussion is likely to lead to policies which don’t work well.

Also one thing that ML systems are good at is recognising patterns in data. Banks could scan all their transactions and look for patterns that correlate with fraud. They currently do this badly and do things like locking credit cards when someone goes to another country and spends money. They could do a better job of that and involve the police in cases of obvious fraud even when the customer doesn’t realise that they are a victim.

This isn’t a reason to criticise “AIs”, it’s a reason to plan defensive technology that matches the capabilities of attackers.

As an aside I used to work for a company that was developing “AI” software to scan bank phone calls and allow banks to recognise employees who acted illegally. Unfortunately the Royal Commission into banking misconduct [9] didn’t impose any penalties that gave the banks a financial reason to avoid criminal activity.

There are many claims about AI systems making large numbers of jobs obsolete. Some of them are outlandish, such as the claims that all white-collar jobs will be obsolete in the near future. There are some reasonable claims, like the ability to replace some mundane jobs.

Replacing jobs that suck with computers, robots, and other machinery is a good thing! Very few people wish that they were working on a farm without a tractor. In 1900 it’s estimated that between 60% and 70% of the world labour force worked in agriculture and 40% of the US labour force did so. Now it’s something like 27% globally and between 1% and 3% in developed countries. Automated factories are also a good thing, as it’s best to avoid boring and dangerous work.

The most plausible claims about job replacement from “AI” concern jobs that involve analysing and summarising documents. One example that comes to mind is the worst kind of journalism where press releases from companies are massaged into the format of a feature article. I don’t think anyone wants that sort of job and doing it with “AI” hopefully means no human has to sign their name to it.

For work like programming few people will be directly replaced by “AI”, but if people can do their work more efficiently while using it then fewer people are required. I don’t think that any programmer likes the part of their job where they have to skim read long documents looking for a clue about how to solve a problem with a library or protocol. An LLM processing the document and finding the potentially useful things will take away the drudgery from the work and allow greater productivity.

The trend in replacing people has been making people work longer. If you force all employees to work 60 hour weeks then that can theoretically allow hiring fewer people than having 40 hour weeks. For some work that applies but for skilled work it mostly doesn’t as productivity and work quality on average drops when people work more than 40 hours in a week.

Another trend for exploiting people is having a low minimum wage and making accommodation expensive so that many people need to work two jobs. What we need is legislation to restore the situation in the 70s where a single full time job was sufficient to provide for a family. The low minimum wage and high expenses for many things is a problem that’s been slowly developing over the course of decades while being mostly ignored by journalists. If they could concentrate on the real issues that are hurting workers today they could incite political action to fix these problems.

There is no shortage of ways of cheating in school and university. There are people who are paid to write essays, mobile phones are used for cheating in exams, etc. Getting an “AI” to write essays makes it easier to cheat for the essay writing part but does so with lower quality and in a less stealthy way.

What’s the worst case scenario? That we have to change to oral exams for all university subjects?

In the US the average annual price for tuition at a university is apparently $25,000. If each student had individually supervised assessment for their exams at a cost of $100 per hour, then about 10 hours of it per year ($1,000) would make the degree cost 4% more. The cost of university in the US is unreasonably high and that’s a problem that needs to be fixed, but a hypothetical case of increasing the price by 4% isn’t going to be a major part of it.
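The 4% figure can be checked with a few lines of arithmetic. The sketch below uses only the tuition, hourly rate, and percentage from the paragraph above; the function names are made up for illustration, and the number of supervised hours is what those figures imply rather than something taken from any university's actual costs.

```python
# Figures quoted in the post; the hours per year are derived from them.
ANNUAL_TUITION_USD = 25_000
SUPERVISION_RATE_USD_PER_HOUR = 100
EXTRA_COST_PERCENT = 4


def supervised_hours_per_year(tuition, rate_per_hour, extra_percent):
    """Hours of one-on-one assessment that a given percentage increase pays for."""
    extra_dollars = tuition * extra_percent / 100
    return extra_dollars / rate_per_hour


def extra_cost_percent(tuition, rate_per_hour, hours):
    """Percentage increase in annual tuition for a given number of supervised hours."""
    return rate_per_hour * hours * 100 / tuition


hours = supervised_hours_per_year(
    ANNUAL_TUITION_USD, SUPERVISION_RATE_USD_PER_HOUR, EXTRA_COST_PERCENT
)
print(f"{EXTRA_COST_PERCENT}% of ${ANNUAL_TUITION_USD} buys {hours:g} supervised hours per year")
```

Changing the hours passed to `extra_cost_percent` shows how sensitive the estimate is: doubling the supervised time to 20 hours per year would make it 8% instead of 4%.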

There have been many claims made that “AI” will break the security of all systems and cause the type of disruption that was previously predicted for year 2000. Bruce Schneier has written a good analysis of the issues including how “AI” can be used by both attackers and defenders [10]. He doesn’t have a strong conclusion on whether the net result will be good or bad but his article does make it clear that the result is not going to be a total disaster.

While I was working on this post I read another post by Bruce Schneier that was significantly more negative about this issue [11]. While I still don’t think this will destroy civilisation I found his other post convincing enough to move computer security from the bad argument section to the weak argument section.

There are issues of bots from “AI” companies doing a bad job of trying to download all the Internet’s content and using a lot of resources. When it was just the major search engines and the Wayback Machine doing it the load was small due to having a small number of organisations that were very good at the way they did it having evolved practices over many years. Now we have a lot of idiots doing it badly and repeatedly hitting generated content.

This is really annoying but is something that we can deal with. Currently my blog and many other sites are hosted on a Hetzner server with an E3-1271 v3 CPU and 32G of RAM, and there are occasions where more than half the CPU power is being used to service web requests from such systems. Even on the “server bidding” (renting servers previously used by other customers) Hetzner isn’t offering systems so slow nowadays; the slowest they offer is about 20% faster than that. This is something that can be dealt with by spending a little more on hosting until the companies doing that go bankrupt.

I’m sure this is a serious problem for some people, but for most people it’s not a big deal. Also hostile traffic on the Internet is something we have all had to deal with as a part of life since the mid to late 90s.

The unreasonably high prices for RAM are annoying and hurt the development of useful computer projects. Big companies can afford it, even with current high prices and large quantities of RAM used for some servers it’s still not significant. But it is a major issue for hobbyists and small projects. Things like setting up a dozen test VMs for FOSS development are now too expensive for many people who develop software in their spare time.

But this is a temporary thing. If AI companies were to keep buying RAM at high rates for a few years, manufacturers would just make more of it to meet demand. In some situations capitalism can work.

There are many people claiming that power used by data centers for “AI” will lead to environmental damage, using power and water when there isn’t enough.

The trend of computer hardware is to get smaller and faster. It hasn’t been going as fast as it used to in many areas but it hasn’t stopped either and it’s an exponential trend. There has been an increase in data centers (DCs) for “AI” use as the use has been increasing faster than the hardware gets smaller. Eventually they will stop increasing faster than advances in hardware and software can match and the size of DCs will decrease.

As the production of renewable energy increases, the environmental cost of energy hungry industries decreases. In a few years this won’t be an issue anyone is bothered about.

Jamie McClelland makes an interesting claim that the AI companies are pushing dangers of “AI” as a method of PR [12]. That seems plausible and combined with the tendency of many journalists to just massage press releases from companies into articles could be the reason for a lot of the bad arguments against AI.

I’ve previously written about Communication and Hostile AIs [13]. I think that filling all communication channels with rubbish is a denial of service attack against society.

In the past communication took some effort. Even the simplest email that was directly targeted at the recipient took some human effort and that reduced its frequency. I get a lot of spam saying something like “I see your web site doesn’t rank in the top for Google searches” while my web site in fact ranks well and the actor named Russell Coker is ranking below me, so I know that such spam hasn’t had the minimum of human involvement. Now a spammer who wanted to do a better job could get an LLM to write spam for every target, so the message would be specifically aimed at them, would take much longer to be recognised by a human as spam, and would also avoid most anti-spam software.

Searching for businesses used to be easy, the phone book had listings for them and there was a real cost to being in the book as well as humans actively trying to stop fraud. Creating fake web sites to get business isn’t too difficult but it’s also not trivial at the moment and such fake sites won’t look complete. Now with LLMs it’s possible to create hundreds of sites that have content and look reasonable without human involvement. Instead of the small number of suicides and homicides inspired by “AI” chat systems we should probably be concerned about people who need psychological or medical advice being misled by bogus web sites created as part of fraud campaigns. Imagine people searching for mental health assistance finding web sites run by cults who oppose psychology as a profession. Imagine people searching for basic medical advice such as how to cook a healthy meal getting sucked in to web sites that start sane and then lead people to Ivermectin as a universal medicine.

LLMs have the potential to take spam from quick and simple attacks to large scale targeted fraud aimed at people and organisations that don’t have the resources to defend against it. There have been many reports of CEO impersonation fraud against major corporations aiming to steal hundreds of thousands of dollars and fraud against individuals who are persuaded to get amounts like $50,000 to help a relative who is allegedly in a difficult situation. But if every corner store experienced the same type of attack that CEOs experience and if every child had someone trying to steal the pocket money in the same way that relatively wealthy people are being targeted now it would really change things.

There is some overlap between filling all communications channels with rubbish (fake news etc) and deep fake. Making a fake photo of a politician or celebrity to lobby for legislative changes is a real issue but it’s not what most people think of when the term “deep fake” is used.

Using photo and video faking to target non-consenting people is a serious issue. It’s not just fake porn (which is a major issue and will cause some suicides) as there are many other possibilities. Fake videos showing behaviour that justifies sacking people from their jobs are going to become an issue. For people in public facing positions even proof that the videos are fake won’t necessarily help them.

Will we find ourselves in a situation where every politician gets deep-fake porn made of them and the only people who run for public office are ones who are cool with that? Will positions of leadership in the technology industry be restricted to people who aren’t bothered by having the most depraved fake porn made of them?

We have seen a lot of evidence of bias in law enforcement and the court system leading to bad results. The Innocence Project attempts to correct that and its web site documents some of the things that have gone wrong [15]. Using “AI” systems to do some of the work of law enforcement by training computers on the flawed results of current systems can entrench bias and also make it harder to spot.

When determining whether someone should be considered a suspect or whether a prisoner should be eligible for parole the number of factors that a human can use is limited. But a computer can take many more factors into account so the issues of whether inappropriate factors are being used can be masked. Computers are also unable to explain decisions that they made and are also able to come up with better fake reasons.

In the past there have been racist policies in the US about banks not lending to people living in suburbs where most houses were owned by non-white people. These policies were documented and the documents have become part of the historical record showing racist policies. If an LLM decides not to lend money to people based on mathematical correlations it determined from historical banking practices, it could assign negative weights to factors such as non-English names and implement the racism in a large array of numbers with no proof.

The current cases of lawyers getting LLM systems to do some of their work and having their incompetence revealed when the computer generated work is shown to be ridiculously bad are amusing. But that is not the real problem. The real problems will start when the computers in police cars start flagging every car owned by a non-white person as having a “probable cause” for a drug stop.

The majority of the ecosystem around “AI” is a financial scam [16]. There are companies and individuals doing good things with machine learning, some of which is based on hardware and software developed as part of this ecosystem. But the majority of it has no plausible path to profits and the future of it inevitably ends with some bankruptcies. There are circular flows of money that have the major cloud providers and NVIDIA looped in. When the values of these companies correct it will become apparent that they have all burned a lot of money keeping this running and all the senior people have got a share of it (the entire purpose of stock options is to allow senior people to suck money out of the company). Then every cloud provider will increase costs while under chapter 11 and all the companies that depend on them will pay whatever it takes. That includes all major companies and most governments. Unlike the dot-com boom and crash and the housing crash, the coming financial crash will impact every company that we deal with and most governments. So the people in first-world countries will effectively be taxed to pay for this scam while the executives go party in Monaco. This may seem like an extreme claim but it all happened before with the dot-com crash and the housing market crash.

The CEO class has an ongoing practice of doing things that aren’t crimes because they lobby (bribe) politicians to make them legal. So the current stock market shenanigans around “AI” don’t seem to involve things that governments consider to be crimes. But any normal person might be surprised to learn that such things are legal and most people would vote for such things to be crimes if they had the opportunity.